Last Week in Kubernetes Development

Stay up-to-date on Kubernetes development in 15 minutes a week.

Week Ending December 23, 2018

Community Meeting

No meeting this week or next week due to the holidays. We look forward to seeing you all again on January 3rd for the first meeting of 2019!

Release Schedule

Next Deadline: 1.14 cycle starts, January 2nd

We’re still in 1.14 team formation and setup. The release team is looking solid and is working on any needed policy changes before the official 1.14 kick-off. Watch for updates on the mailing list.

Featured PRs

#70875: Enable kustomize in kubectl

Support for the Kustomize YAML manipulation tool has been added to kubectl apply. It only activates if the directory you are in contains a kustomization.yaml file; when it does, the YAML patches are applied to your files automatically before upload. Check out the Kustomize docs for more information on the tool.

For those who have not used Kustomize, it’s an alternative to (or sometimes an addition to) templating tools like Helm and Ksonnet. It lets you edit YAML documents with patches and overlays rather than direct changes, which allows for things like per-team and per-app customizations of shared base objects.
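To make the patch-and-overlay idea concrete, here is a minimal Python sketch of layering a per-team patch over a shared base object. It is an illustration only, not Kustomize's actual merge algorithm: `apply_overlay` is a hypothetical helper, the manifests are plain dictionaries rather than parsed YAML files, and lists in the patch replace the base value wholesale instead of being merged by key.

```python
import copy


def apply_overlay(base, patch):
    """Return a new dict with `patch` merged on top of `base`.

    Hypothetical helper for illustration: nested dicts merge recursively,
    any other value in the patch (including lists) replaces the base value.
    """
    result = copy.deepcopy(base)
    for key, value in patch.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = apply_overlay(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


# Shared base object, as it might look after parsing a Deployment manifest.
base_deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web", "labels": {"app": "web"}},
    "spec": {"replicas": 1},
}

# Per-team overlay: only the fields that differ from the base.
team_patch = {
    "metadata": {"labels": {"team": "payments"}},
    "spec": {"replicas": 3},
}

customized = apply_overlay(base_deployment, team_patch)
```

The base object is never modified, so several teams can derive their own variants from the same starting point.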

#70344: consolidate node deletion logic between kube-controller-manager and cloud-controller-manager

This PR creates a new cloud/node_lifecycle controller to share logic between in-tree and out-of-tree cloud plugins. This will help avoid mismatched behavior as more clusters switch to out-of-tree plugins, and it ensures that critical deletion logic remains in-tree where we can all keep an eye on it.

#72239: Promote Lease API to v1

This is a follow-up to last week’s default enabling of the new node heartbeat subsystem: the Lease API that powers it is now GA. While this is immediately useful for node heartbeats, it can also be used by other code. The hope is that eventually all users of the LeaderElection module in client-go can switch over to the Lease API instead.
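For readers unfamiliar with lease-style coordination, the core idea can be sketched in a few lines of Python. This is a simplified in-memory model, not the Kubernetes client: the `Lease` dataclass loosely mirrors a few fields of the Lease spec (holder identity, lease duration, renew time, transitions), `try_acquire_or_renew` is a hypothetical helper, and the optimistic-concurrency checks a real API server performs on updates are left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Lease:
    """Simplified stand-in for a Lease object (assumed field names)."""
    holder_identity: Optional[str] = None
    lease_duration_seconds: float = 15.0
    renew_time: float = 0.0
    lease_transitions: int = 0


def is_expired(lease: Lease, now: float) -> bool:
    """A lease with no holder, or one not renewed in time, is up for grabs."""
    if lease.holder_identity is None:
        return True
    return now > lease.renew_time + lease.lease_duration_seconds


def try_acquire_or_renew(lease: Lease, candidate: str, now: float) -> bool:
    """Renew if `candidate` already holds the lease, take over if it expired."""
    if lease.holder_identity == candidate:
        lease.renew_time = now  # heartbeat: the holder refreshes its lease
        return True
    if is_expired(lease, now):
        lease.holder_identity = candidate
        lease.renew_time = now
        lease.lease_transitions += 1  # count each change of holder
        return True
    return False  # someone else holds a still-valid lease


lease = Lease()
first = try_acquire_or_renew(lease, "node-a", now=100.0)    # acquires
blocked = try_acquire_or_renew(lease, "node-b", now=110.0)  # lease still valid
```

A node heartbeat is the degenerate case where only one writer ever renews the lease, while leader election is the case where several candidates compete for it.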

#71355: Make kube-proxy service implementation optional

A short patch, but potentially very useful for large sites that make heavy use of service mesh tools like Istio. If you add a service.kubernetes.io/service-proxy-name label to a service (the value doesn’t matter as long as the label is present), kube-proxy will ignore the service and its endpoints. The API for the service is unaffected, but no underlying proxy rules are configured. If those rules were already redundant with the proxy provided by your service mesh, and your clusters are very large or under heavy churn (or both), this could save quite a few CPU cycles.
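The filtering behavior can be pictured with a small Python sketch. It is a simplified model under stated assumptions, not kube-proxy's code: services are plain dictionaries, `handled_by_kube_proxy` and `services_to_program` are hypothetical helpers, and the key is looked up in the Service's metadata labels, which is our understanding of how the merged PR selects services.

```python
SERVICE_PROXY_NAME_KEY = "service.kubernetes.io/service-proxy-name"


def handled_by_kube_proxy(service):
    """True unless the service carries the opt-out key (any value counts)."""
    labels = service.get("metadata", {}).get("labels") or {}
    return SERVICE_PROXY_NAME_KEY not in labels


def services_to_program(services):
    """Names of the services for which proxy rules would still be set up."""
    return [s["metadata"]["name"] for s in services if handled_by_kube_proxy(s)]


# Illustrative service list; the names and label values are made up.
services = [
    {"metadata": {"name": "web", "labels": {"app": "web"}}},
    {"metadata": {"name": "mesh-only", "labels": {SERVICE_PROXY_NAME_KEY: "istio"}}},
    {"metadata": {"name": "empty-value", "labels": {SERVICE_PROXY_NAME_KEY: ""}}},
]

programmed = services_to_program(services)
```

Only presence of the key is checked, so a service with an empty value is skipped just like one with a meaningful value.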

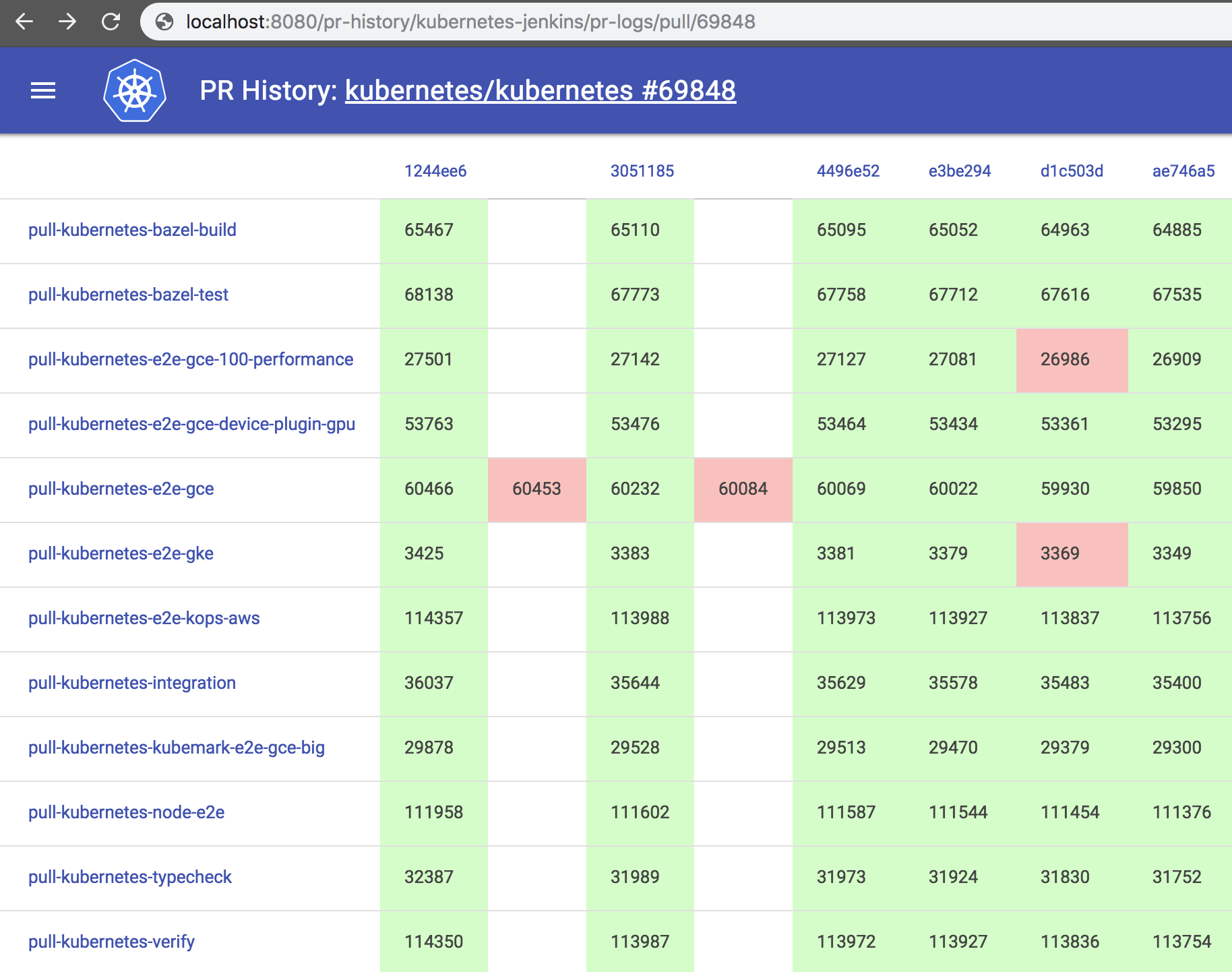

k/test-infra#10169

And finally, an awesome new visualization from @ibzib: a display of all the Prow jobs related to a given PR. You can check out the view for this PR itself as an example.

Other Merges

- #72038: Extract old manually vendored GCE libraries in favor of normal vendoring

- #71978: Move predicate types from pkg/scheduler/algorithm to pkg/scheduler/algorithm/predicates

- #70866: Reduce Azure API calls by replacing the current backoff retry with SDK’s backoff

- k/test-infra#10141: Pubsub Subscription Support in Prow

- k/community#3017: shyamjvs taking over as SIG-Scalability chair

Version Updates

Last Week In Kubernetes Development (LWKD) is a product of multiple contributors participating in Kubernetes SIG Contributor Experience. All original content is licensed Creative Commons Share-Alike, although linked content and images may be differently licensed. LWKD does collect some information on readers; see our privacy notice for details.

You may contribute to LWKD by submitting pull requests or issues on the LWKD GitHub repo.